High Cost Tests and High Value Tests

Posted on February 22, 2017

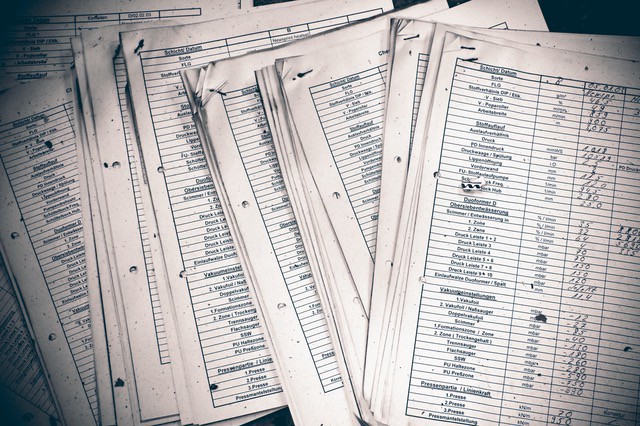

You probably don’t need an actual ledger to measure the costs and benefits of your tests

(This is a sidebar to an email course called Noel Rappin’s Testing Journal that you can sign up for here. It relates to the content of the email course, but didn’t quite fit in. If you like this post, you’ll probably also find the course valuable. You can also hear me discuss similar topics with Justin Searls and Sam Phippen on an episode of the Tech Done Right podcast.)

I often find myself referring to tests as “high-value” or “high-cost”. Which may lead you to the obvious question of what the heck makes a test high-value, since there’s not exactly a market where you can trade tests in for gold or something like that.

Let’s dig in to that. Tests have costs and tests have value.

The cost of a test includes:

- The time it takes to write the test

- The time it takes to run the test every time the suite runs

- The time it takes to understand the test

- The time it takes to fix the test if it breaks and the underlying code is OK

- Maybe, the time it takes to change the code to make the code testable.

The currency we are using here is time. Tests cost time. Eventually every programming decision costs time: when we talk about code quality making it easier or harder to make changes, we are inevitably talking about the amount of time it takes to change the code.

How can writing a test save time?

- The act of writing the test can make it easier to define the structure of the code.

- Running an automated test is frequently faster than manually going through elaborate integration steps.

- The test can provide efficient proof that code is working as desired.

- That test can provide warning that a change in code has triggered a change in behavior.

Both the cost and the value of the test are scattered over the entire lifecycle of the code. The cost starts right away when the test is written, but continues to add up as the test is read and executed. The cost really builds if the test fails when there is no underlying problem with the code. A fragile test can cost quite a lot of time.

The value can come when the test is written, if the test enables you to write the correct code more quickly, as many TDD tests do. The value can also come from using the test as a replacement for manual testing. And still more time can be saved later on, when the test fails in a case where the failure indicates a real problem with new code.

For example, the Shoulda gem provides a bunch of matchers like should belong_to :users, and that one line is the whole test. This test is low-cost: it takes very little time to write, is easy to understand, and executes quickly. But it’s also low-value: it’s tied to the database, not the code logic, and so doesn’t say much about the design of the code. It’s also very unlikely to fail in isolation. If the association with users goes away, it’s likely that many tests will fail, so the failure of this test probably isn’t telling you anything about the state of the code that you don’t already know.

Conversely, imagine an end-to-end integration test written with Capybara that walks through an entire checkout process. This test is likely to be high-cost. It might require a lot of data, a number of testing steps, and complex output matching. At the same time, the test could be high-value. It might be your only way to find out if all the different pieces collaborating in the checkout are communicating correctly, which is to say a test like this might find errors that no other test in the system will flag. It might also be faster to run the test by itself when developing than to walk through the checkout manually.
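You probably don’t need to do this for real, but putting rough numbers on the two examples above can make the trade-off easier to see. The sketch below is only an illustration: the TestLedger struct, its field names, and every number in it are invented for this example, not measurements from any real suite.

```ruby
# Back-of-the-envelope model of one test's lifetime cost and value, in minutes.
# TestLedger and all of its fields are hypothetical, made up for illustration.
TestLedger = Struct.new(
  :write_minutes,            # time to write the test
  :run_seconds,              # time for a single run
  :runs,                     # number of runs over the test's life
  :false_alarms,             # failures where the underlying code was fine
  :minutes_per_false_alarm,  # time spent fixing each false alarm
  :manual_checks_replaced,   # manual walkthroughs the test stood in for
  :minutes_per_manual_check, # time one manual walkthrough would take
  :real_bugs_caught,         # failures that pointed at a real problem
  :minutes_saved_per_bug,    # time saved by catching each bug early
  keyword_init: true
) do
  def cost
    write_minutes + (run_seconds * runs / 60.0) + (false_alarms * minutes_per_false_alarm)
  end

  def value
    (manual_checks_replaced * minutes_per_manual_check) + (real_bugs_caught * minutes_saved_per_bug)
  end

  def net
    value - cost
  end
end

# Assumed numbers for a one-line association test: cheap, but it never
# replaces manual work and never catches anything on its own.
association_test = TestLedger.new(
  write_minutes: 1, run_seconds: 0.05, runs: 2000,
  false_alarms: 0, minutes_per_false_alarm: 0,
  manual_checks_replaced: 0, minutes_per_manual_check: 0,
  real_bugs_caught: 0, minutes_saved_per_bug: 0
)

# Assumed numbers for an end-to-end checkout test: expensive to write, run,
# and maintain, but it replaces manual walkthroughs and catches real bugs.
checkout_test = TestLedger.new(
  write_minutes: 120, run_seconds: 30, runs: 2000,
  false_alarms: 5, minutes_per_false_alarm: 30,
  manual_checks_replaced: 200, minutes_per_manual_check: 5,
  real_bugs_caught: 3, minutes_saved_per_bug: 120
)

puts "association test net minutes: #{association_test.net.round(1)}"
puts "checkout test net minutes:    #{checkout_test.net.round(1)}"
```

With these made-up inputs the expensive test still comes out ahead, but nudge the false-alarm count up a little and it won’t. That sensitivity is the point: the same test can be a bargain or a burden depending on how fragile it is.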

So the goal in keeping your test suite happy over the long haul is to minimize the cost of your tests and maximize their value. Which I realize you probably know, but explicitly thinking in these terms can be helpful.

Some concrete steps you can take include:

- Instead of thinking about what will make a test pass, think about what will make it fail. If there’s no way to make the test fail that won’t make an already existing test fail, maybe you don’t need the test.

- Think of integration tests that will help you automate a series of actions that are useful while developing the code, so you get time savings from running the integration test rather than using manual tests.

- For unit tests, write the test so that it invokes the minimal amount of code needed to make the test fail. For example, error cases often can be set up as narrowly-focused unit tests with test doubles rather than much slower and harder to set up integration tests.

- Try to avoid high-cost activities like calling external libraries or saving a lot of objects to a database. In testing, a good way to avoid this is to use test doubles so that unit tests don’t have to use real dependencies.

- If you find that a single bug makes multiple tests fail, think about whether all those tests are needed. If the failure is in setup, think about whether all those tests need all that setup.

- Sometimes, tests that are useful during development are completely superseded by later tests. Those earlier tests can be deleted.
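As a minimal sketch of the unit-test and test-double advice above, here is an error case tested with a hand-rolled double instead of a full integration run. Checkout, CardDeclined, and DecliningGateway are all hypothetical names invented for this example, and I’m using Minitest only because it ships with Ruby; the same shape works in RSpec.

```ruby
require "minitest/autorun"

# Hypothetical error a payment gateway might raise; not from any real library.
class CardDeclined < StandardError; end

# Hypothetical code under test: charges through a gateway and reports the outcome.
class Checkout
  def initialize(gateway)
    @gateway = gateway
  end

  def purchase(amount_cents)
    @gateway.charge(amount_cents)
    :paid
  rescue CardDeclined
    :declined
  end
end

# Hand-rolled test double: stands in for the real gateway and always declines,
# so the test never touches the network or a database.
class DecliningGateway
  def charge(_amount_cents)
    raise CardDeclined, "card declined"
  end
end

class CheckoutTest < Minitest::Test
  # Fails only if the decline handling in Checkout#purchase breaks.
  def test_declined_card_is_reported
    checkout = Checkout.new(DecliningGateway.new)
    assert_equal :declined, checkout.purchase(1_000)
  end
end
```

The test exercises only the few lines that handle a declined card, so it is cheap to write and run, and there is exactly one kind of change that makes it fail.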

I find it does help improve your testing if you think about both the short-term and long-term cost of tests as you write them. And improving your testing can also improve your code.

(Thanks. If you like this post, you’ll probably also like my Testing Journal. Please sign up and let us know what you think. You can also hear me discuss similar topics with Justin Searls and Sam Phippen on an episode of the Tech Done Right podcast.)